Accelerate machine learning with GCP BigQuery

How to use Google Cloud’s BigQuery data warehousing solution to make SQL relevant in the new ML age

July 17, 2020 | By: Pradipta Dhar

Machine learning (ML) has taken the world of technology by storm, and its applications have found use in almost all fields of modern life. But the journey to realize the full potential of emerging AI trends has not been easy for the enterprise. Two of the biggest challenges faced by the enterprise today are:

- Availability of the right skill set. People from the world of data analytics who deal with data regularly, and who are knowledgeable on the subject matter, have mostly worked on SQL with not enough expertise in other programming languages such as Python and R. This has been a hindrance in exploring and harnessing the true potential of machine learning in their work.

- Dealing with high volume of data for data-mining activities. The traditional tools available in the enterprise today are not equipped to handle the complexity and vast volumes of data that are an essential part of dealing with any machine-learning-related activity.

However, Google’s BigQuery tool and its applications in the field of machine learning is aimed at changing the scenario completely. By bringing together the power of machine-learning concepts and models along with the ease of leveraging the well-known capabilities of SQL, BigQuery looks to face these challenges.

Introducing Google Cloud Platform’s BigQuery ML solution

BigQuery is Google’s fully managed and serverless data warehousing solution in the Google Cloud Platform. It can handle vast volumes of data (in the unit of petabytes) and as a software as a solution (SaaS) service, also possesses machine-learning features that can be utilized by professionals with SQL knowledge and experience. BigQuery allows us to build, validate and deploy the model for predicting real-world use cases.

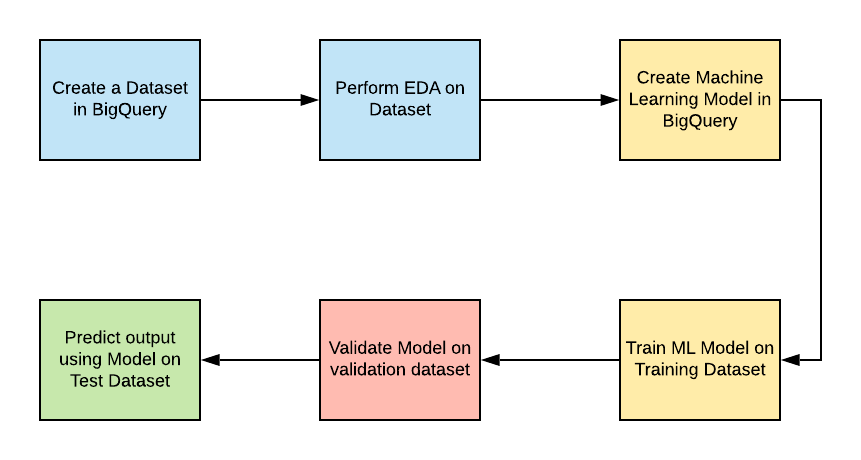

In this article, we’ll walk through the various aspects of building a machine-learning model using BigQuery. We’ll start by selecting a dataset that will be used by our model. Next, we’ll perform exploratory data analysis (EDA) on the dataset to ensure that it is clean and free of a high number of anomalous data points. Once we have the dataset ready, we’ll use approximately 60% of the dataset to create and train our model. Once we have our model trained and the model metrics are within expected thresholds, we will run the model on a validation dataset and finally use the model to predict the output for the remaining data.

Creating a model in BigQuery

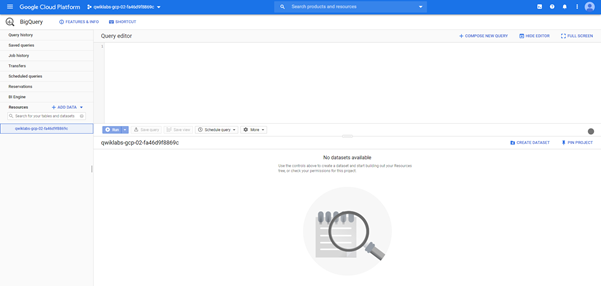

1. The first thing that is required for us to create a machine-learning model in BigQuery is a dataset that we can work with. For this purpose, we need to create a dataset that we want to work with or use a dataset that is available in BigQuery (for learning purposes).

The above window shows us the BigQuery console. All resources such as datasets and ML models can be viewed under the project name on the left under the Resources tab.

2. Once we have a dataset that we can work with, the next step is to create the machine-learning model. This is the part where we see the true potential of using BigQuery for creating our model, as we have the advantage of BigQuery’s scale, performance and ability to work with SQL to use the model.

Below is a sample query for creating a model and passing its various parameters, labels and features, and training data.

CREATE OR REPLACE MODEL `bqml.ml_model`

OPTIONS(model_type='logistic_reg') AS

SELECT

IF(

IFNULL(

IFNULL(

IFNULL(

FROM

`

WHERE

_TABLE_SUFFIX BETWEEN

LIMIT 100000;

The key parameters in the above SQL are:

- Name of the model: ‘bqml’

- The type of model: ‘binary logistic regression’ for this use case

In addition to “binary logistic regression,” Google supports other popular ML models as well. Here is a complete reference and options for the “create model” syntax.

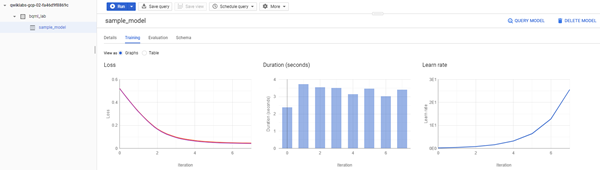

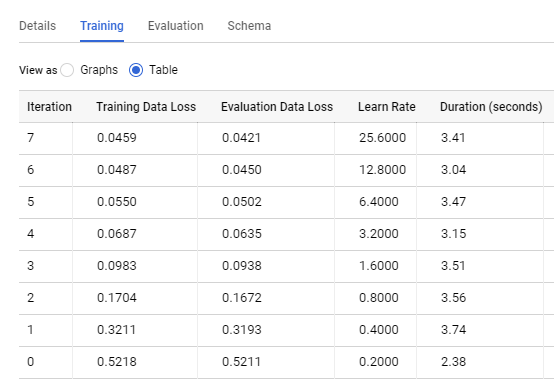

Viewing and understanding the different parameters and model details

BigQuery console provides for evaluating the various metrics associated with the model. We can then see the various metrics and model information in the BigQuery console diagrammatically and in tabular format—based on this, we can decide if we need to train the model further.

Evaluation and prediction using the designed model

Once the model is trained, we can then use queries like the below sample queries on a validation dataset and then to predict outputs of a prediction dataset.

The below query is used for validating the model as can be identified by the keyword “ml.evaluate” as well as the new date range for the dataset being fed to the query as compared to the training query.

SELECT

*

FROM

ml.EVALUATE(MODEL `bqml.ml_model`, (

SELECT

IF(<label_field> IS NULL, 0, 1) AS label,

IFNULL(<input_feature_1>, ""),

<input_feature_2>,

IFNULL(<input_feature_3>, ""),

IFNULL(<input_feature_4>, 0)

FROM

`<dataset_name_goes_here>`

WHERE

_TABLE_SUFFIX BETWEEN <date_range_goes_here>));

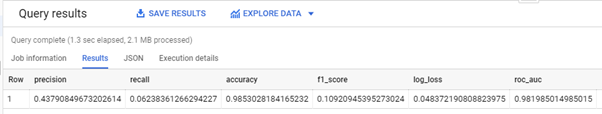

The output of the above validation query returns the following columns since we used a logistic regression model: precision, recall, accuracy, f1_score, log_loss, roc_auc.

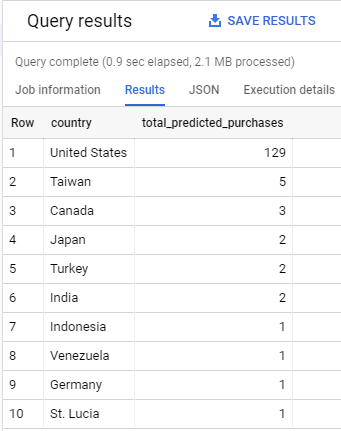

The below sample query is used for predicting the total purchases based on country for a sample dataset as can be seen from the first SELECT statement.

SELECT

country,

SUM(predicted_label) as total_predicted_purchases

FROM

ml.PREDICT(MODEL `bqml.ml_model`, (

SELECT

IFNULL(<input_feature_1>, ""),

<input_feature_2>,

IFNULL(<input_feature_3>, ""),

IFNULL(<input_feature_4>, 0)

FROM

`<dataset_name_goes_here>`

WHERE

_TABLE_SUFFIX BETWEEN <date_range_goes_here>))

GROUP BY country

ORDER BY total_predicted_purchases DESC

LIMIT 10;

The below table shows the output of the prediction query where we can see the total purchases for each country sorted in a descending order.

BigQuery is the answer for enterprises that want to accelerate ML transformation

BigQuery has helped bring the power of machine learning into the hands of business analysts and data analysts by integrating it with SQL (the bread and butter for a data analyst), all while maintaining everything in the cloud infrastructure without capital investments on infrastructure. Coupled with pay-per-usage with minimal IT support requirement, it’s a tool of choice for enterprises looking to accelerate their ML journey.

Google has also provided us a great way to learn and explore these tools using their hands-on lab sessions for various services and use cases, all of which can be accessed at Qwiklabs.

The queries and screenshots above are part of Google’s “Getting Started with BQML” lab; this lab can be accessed at “Getting Started with BQML.”

Images in this article were taken from Google Cloud Platform BigQuery.

Pradipta Dhar is a principal software engineer at TEKsystems with extensive expertise in machine learning and big data.